A Teams invitation lands in my inbox — for a meeting I never accepted. “Please confirm your attendance at microsoft.com/devicelogin with the code JK7QH9XW.” The URL is real. The code is real. If I type it in, my passkey authenticates a perfectly legitimate login — and the resulting access token belongs to the attacker.

This is how Storm-2372 operates — a group attributed with moderate confidence to Russia, working its way through Microsoft 365 tenants since August 2024. By March 2026, The Hacker News had documented 340+ affected organisations across five countries. The technique has a name that sounds harmless: device code phishing.

What the device code flow is actually for

The device code flow is a sign-in method for devices that can’t easily ask you to type a password — smart TVs, IoT devices, command-line tools, the display in the meeting room. It splits the login across two devices. The constrained device starts the flow and shows a short code. The user then opens microsoft.com/devicelogin on their phone or laptop, enters the code, and authenticates there as usual. Once they confirm the login, the constrained device receives its access token in the background and is signed in.
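For readers who prefer code, here is a minimal sketch of the two requests the constrained device makes. The endpoint paths and the grant type string follow RFC 8628 and the Microsoft identity platform's v2.0 endpoints as I understand them. The `post` transport, the `common` tenant placeholder, and the function names are assumptions made for illustration, not any library's API.

```python
import time
from typing import Callable, Dict

# v2.0 endpoints; "common" is a placeholder tenant (assumption for this sketch).
AUTHORITY = "https://login.microsoftonline.com/common/oauth2/v2.0"
DEVICE_GRANT = "urn:ietf:params:oauth:grant-type:device_code"

# Hypothetical transport: any callable that POSTs form fields and returns parsed JSON.
Transport = Callable[[str, Dict[str, str]], Dict[str, object]]


def start_device_flow(post: Transport, client_id: str, scope: str) -> Dict[str, object]:
    """Step 1: the constrained device requests a device_code / user_code pair."""
    return post(f"{AUTHORITY}/devicecode", {"client_id": client_id, "scope": scope})


def poll_for_token(post: Transport, client_id: str, flow: Dict[str, object],
                   sleep: Callable[[float], None] = time.sleep) -> Dict[str, object]:
    """Step 3: the device polls until the user has approved the code elsewhere."""
    interval = int(flow.get("interval", 5))
    waited = 0
    while waited < int(flow["expires_in"]):
        reply = post(f"{AUTHORITY}/token", {
            "grant_type": DEVICE_GRANT,
            "client_id": client_id,
            "device_code": str(flow["device_code"]),
        })
        if "access_token" in reply:
            return reply  # tokens go to whoever holds the device_code
        error = reply.get("error")
        if error == "slow_down":
            interval += 5  # RFC 8628: back off by five seconds
        elif error != "authorization_pending":
            raise RuntimeError(f"device flow failed: {error}")
        sleep(interval)
        waited += interval
    raise TimeoutError("user code expired before approval")
```

Step 2, the user typing the code into the browser, never appears in this code. The polling side only ever presents the `device_code`, so nothing ties the token to the person who entered the code.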

In practice, this is how the Azure CLI signs you in, how kubectl connects to a cluster behind Entra, how the conference-room display joins a Teams meeting. It’s a real, useful flow.

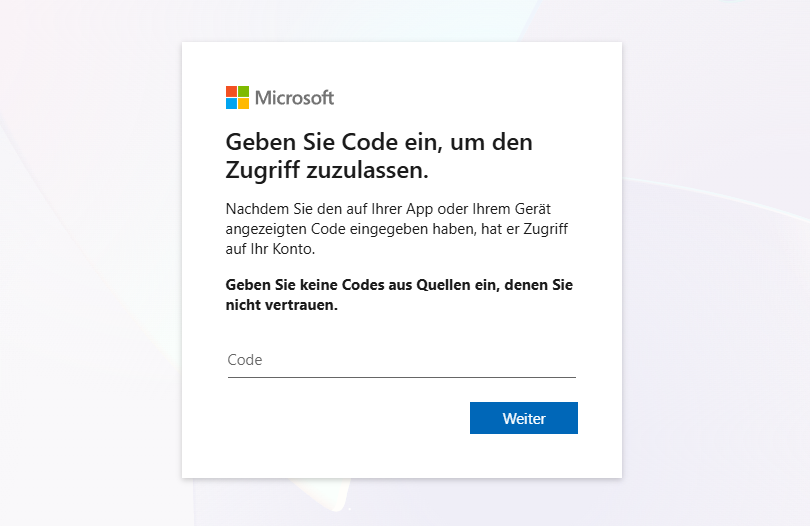

The page the user lands on at microsoft.com/devicelogin, localised to the browser language.

Microsoft prints a phishing warning right on this page: “Geben Sie keine Codes aus Quellen ein, denen Sie nicht vertrauen.” — don’t enter codes from sources you don’t trust. The warning is honest, and it names the problem in one sentence. At the moment of consent, the flow has nothing to fall back on except the user’s own judgement.

Why FIDO2 doesn’t help here

Passkeys are phishing-resistant because they’re bound to the exact domain of the login page. That defends against fake Microsoft pages: a passkey simply won’t sign anything against microsoft-login.com. But in the device code flow, there is no fake domain. The user really is on microsoft.com/devicelogin, the browser really is talking to Microsoft, MFA really is being satisfied. The login is genuine.
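The domain binding can be illustrated with a deliberately simplified sketch of the WebAuthn scoping rule. The relying party ID `login.example.com` and the function name are hypothetical, and real browsers additionally consult the public suffix list, which this sketch leaves out.

```python
def rp_id_allowed(page_host: str, rp_id: str) -> bool:
    """Simplified scoping rule: the page host must equal the RP ID
    or be a subdomain of it. Public-suffix handling is omitted."""
    page_host = page_host.lower().rstrip(".")
    rp_id = rp_id.lower().rstrip(".")
    return page_host == rp_id or page_host.endswith("." + rp_id)


RP_ID = "login.example.com"  # hypothetical relying party ID

print(rp_id_allowed("login.example.com", RP_ID))            # True
print(rp_id_allowed("example-login.com", RP_ID))            # False
print(rp_id_allowed("login.example.com.evil.test", RP_ID))  # False
```

A lookalike host fails this check, which is why a fake login page cannot use the passkey. In device code phishing the host is the genuine one, so the check passes and has nothing to object to.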

The weakness isn’t in the login itself. It’s in the step that follows: consent. The user enters the code thinking they’re authorising their own device. The flow has no way to tell them otherwise. And because the attacker started the flow on their own machine, that machine holds the matching device code and is the one polling Microsoft for the result, so the resulting token is issued to the attacker’s session.

From Entra’s perspective, the sign-in looks immaculate afterwards: successful, MFA-confirmed, passkey-signed. In the logs, only the authentication protocol deviceCode stands out, and most tenants don’t monitor it, because it’s rare.
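Because that marker is rare, it is cheap to watch for. The sketch below filters exported sign-in records for successful device code sign-ins. It assumes each record is a dict shaped like a Microsoft Graph signIn object, with an `authenticationProtocol` field and a `status.errorCode` of 0 on success. Check both against your own export before relying on it, and note that the sample records are invented.

```python
from typing import Dict, Iterable, List


def device_code_sign_ins(records: Iterable[Dict[str, object]]) -> List[Dict[str, object]]:
    """Return successful sign-ins whose authentication protocol is device code."""
    hits = []
    for rec in records:
        protocol = str(rec.get("authenticationProtocol", "")).lower()
        status = rec.get("status") or {}
        # Assumption: errorCode 0 marks a successful sign-in.
        succeeded = isinstance(status, dict) and status.get("errorCode") == 0
        if protocol == "devicecode" and succeeded:
            hits.append(rec)
    return hits


# Invented sample records, for illustration only.
records = [
    {"id": "a1", "authenticationProtocol": "none", "status": {"errorCode": 0}},
    {"id": "b2", "authenticationProtocol": "deviceCode", "status": {"errorCode": 0}},
    {"id": "c3", "authenticationProtocol": "deviceCode", "status": {"errorCode": 1}},
]
print([r["id"] for r in device_code_sign_ins(records)])  # ['b2']
```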

What the attacker gets

A successful device code phish yields an access token valid for about an hour, and — far more useful — a refresh token that, depending on tenant configuration, can stay valid for up to 90 days and be exchanged for new tokens with new permissions. With those, the attacker has whatever the victim has.

Storm-2372 has been observed doing exactly what you’d expect: using the Microsoft Graph API to search the victim’s mailbox for keywords like password, admin, secret, teamviewer, anydesk, ministry, gov, then exfiltrating the matching messages. Lateral movement happens from there through email sent from the now-compromised account to internal contacts. The second wave is far more successful, because the lure now comes from a trusted address inside the victim’s own organisation.

There’s no phishing kit to fingerprint, no malicious domain to block, no lookalike infrastructure to take down. From a defender’s perspective, the entire attack happens inside Microsoft’s own legitimate flow. Many admins aren’t aware of this weakness and assume — once passkeys are in use — that no login is possible without access to them.

What actually helps

Microsoft made the conditional access condition Authentication Flows generally available in 2024. A policy that blocks the device code flow ends the problem for most tenants — device code isn’t used in normal Office workflows. Legitimate use cases (conference-room displays, occasional CLI tools) can be carved out with user exclusions. Since February 2025, there’s also a Microsoft-managed policy that enables this block for tenants that don’t use device code at all.

The policy itself takes about five minutes to set up:

- In the Microsoft Entra admin center, go to Protection > Conditional Access > Policies.

- Click New policy and give it a name, e.g. Block Device Code Flow.

- Under Assignments > Users, include All users. Exclude your break-glass accounts, plus any service accounts and CLI users that genuinely depend on device code.

- Under Target resources, choose All resources (formerly All cloud apps).

- Under Conditions > Authentication flows, set Configure to Yes and select Device code flow.

- Under Access controls > Grant, select Block access.

- Set Enable policy to Report-only first. Nothing is blocked in this mode; the sign-in logs only record what the policy would have done. After a few days, check them for sign-ins that would have been blocked but turn out to be legitimate, add the affected users to the exclusion list, then flip the policy to On.

Microsoft has a step-by-step walkthrough for the same procedure if you want to compare against an officially documented click path.
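If you would rather script the policy than click through it, the same settings can be sketched as a Microsoft Graph conditional access payload. The property names below (`authenticationFlows.transferMethods`, `enabledForReportingButNotEnforced`, and so on) reflect my reading of the Graph conditionalAccessPolicy resource and should be checked against the current reference. The function name and the placeholder object ID are hypothetical.

```python
import json
from typing import Dict, List


def device_code_block_policy(exclude_user_ids: List[str],
                             enforce: bool = False) -> Dict[str, object]:
    """Build a policy body that blocks device code flow; report-only by default."""
    return {
        "displayName": "Block Device Code Flow",
        "state": "enabled" if enforce else "enabledForReportingButNotEnforced",
        "conditions": {
            "users": {"includeUsers": ["All"], "excludeUsers": list(exclude_user_ids)},
            "applications": {"includeApplications": ["All"]},
            "clientAppTypes": ["all"],
            # Assumed property name for the Authentication flows condition.
            "authenticationFlows": {"transferMethods": "deviceCodeFlow"},
        },
        "grantControls": {"operator": "OR", "builtInControls": ["block"]},
    }


# Placeholder ID; replace with the object IDs of your excluded accounts.
print(json.dumps(device_code_block_policy(["<break-glass-object-id>"]), indent=2))
```

The body would be sent as a POST to the `identity/conditionalAccess/policies` endpoint of Microsoft Graph. Keeping `enforce=False` mirrors the report-only step above.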

Awareness training is the second line, not the first. Two years into this campaign, “tell your staff not to enter codes” is a slogan, not a control. Trained staff click anyway when the lure comes from a colleague’s compromised mailbox — and the next wave is already AI-generated and tailored to the tenant.

I’ll read the next Teams invitation in my inbox knowing that my passkey can’t tell a friendly code from an unfriendly one — and that conditional access protects me anyway.